6.5. Enabling High Availability

High availability keeps Acronis Cyber Infrastructure services operational even if the node they are located on fails. In such cases, services from a failed node are relocated to healthy nodes according to the Raft consensus algorithm. High availability is ensured by:

- Metadata redundancy. A storage cluster functions as long as a majority of its MDS servers are up; not all of them need to be running. Setting up multiple MDS servers in the cluster ensures that if one MDS server fails, the remaining MDS servers continue to control the cluster.

- Data redundancy. Copies of each piece of data are stored across different storage nodes to ensure that the data is available even if some of the storage nodes are inaccessible.

- Monitoring of node health.
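The majority rule for MDS servers can be illustrated with a little arithmetic. The following is a minimal sketch, not part of the product: the function names are hypothetical, and it only assumes that "majority" means more than half of the deployed MDS servers.

```python
def majority(total_mds: int) -> int:
    """Smallest number of MDS servers that forms a majority."""
    if total_mds < 1:
        raise ValueError("at least one MDS server is required")
    return total_mds // 2 + 1


def tolerated_failures(total_mds: int) -> int:
    """Number of MDS servers that can fail while a majority stays up."""
    return total_mds - majority(total_mds)


if __name__ == "__main__":
    # Print the majority size and tolerated failures for a few cluster sizes.
    for n in (1, 3, 5):
        print(f"{n} MDS: majority={majority(n)}, "
              f"tolerated failures={tolerated_failures(n)}")
```

Under this assumption, a single MDS server tolerates no failures, while three MDS servers tolerate one, which is consistent with the recommendation to deploy three or more metadata servers.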

To achieve the complete high availability of the storage cluster and its services, we recommend that you do the following:

- deploy three or more metadata servers,

- enable management node HA, and

- enable HA for the specific service.

Note

The required number of metadata servers is deployed automatically on recommended hardware configurations. Management node HA must be enabled manually, as described in the next subsection. High availability for a service is enabled by adding the minimum required number of nodes to that service’s cluster.

On top of highly available metadata services and enabled management node HA, Acronis Cyber Infrastructure provides additional high availability for the following services:

- Admin panel. If the management node fails or becomes unreachable over the network, an admin panel instance on another node takes over the panel’s service so it remains accessible at the same dedicated IP address. The relocation of the service can take several minutes. Admin panel HA is enabled manually along with management node HA (see Enabling Management Node High Availability).

- Virtual machines. If a compute node fails or becomes unreachable over the network, virtual machines hosted on it are evacuated to other healthy compute nodes based on their free resources. The compute cluster can survive the failure of only one node. By default, high availability for virtual machines is enabled automatically after the compute cluster is created and can be disabled manually, if required (see Configuring Virtual Machine High Availability).

- iSCSI service. If the active path to volumes exported via iSCSI fails (e.g., a storage node with active iSCSI targets fails or becomes unreachable over the network), the active path is rerouted via targets located on healthy nodes. Volumes exported via iSCSI remain accessible as long as there is at least one path to them.

- S3 service. If an S3 node fails or becomes unreachable over the network, name server and object server components hosted on it are automatically balanced and migrated between other S3 nodes. S3 gateways are not automatically migrated; their high availability is based on DNS records. You need to maintain the DNS records manually when adding or removing S3 gateways. High availability for S3 service is enabled automatically after enabling management node HA and creating an S3 cluster from three or more nodes. An S3 cluster of three nodes may lose one node and remain operational.

- Backup gateway service. If a backup gateway node fails or becomes unreachable over the network, other nodes in the backup gateway cluster continue to provide access to the chosen storage backend. Backup gateways are not automatically migrated; their high availability is based on DNS records. You need to maintain the DNS records manually when adding or removing backup gateways. High availability for the backup gateway is enabled automatically after creating a backup gateway cluster from two or more nodes. The storage backend remains accessible as long as at least one node in the backup gateway cluster is healthy.

- NFS shares. If a storage node fails or becomes unreachable over the network, NFS volumes located on it are migrated to other NFS nodes. High availability for NFS volumes on a storage node is enabled automatically after creating an NFS cluster.
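Because high availability for S3 gateways and backup gateways relies on manually maintained DNS records, it can help to compare what a hostname resolves to against the gateway addresses you expect. The following is a minimal sketch under stated assumptions: the function names, the hostname, and the IP addresses are hypothetical, and the check uses only the Python standard library rather than any product tool.

```python
import socket
from typing import Callable, Dict, Iterable, List, Set


def resolve_with_system_dns(hostname: str) -> Set[str]:
    """Return the IP addresses the system resolver reports for hostname."""
    infos = socket.getaddrinfo(hostname, None)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] holds the IP address as a string.
    return {info[4][0] for info in infos}


def check_gateway_records(
    hostname: str,
    gateway_ips: Iterable[str],
    resolve: Callable[[str], Set[str]] = resolve_with_system_dns,
) -> Dict[str, List[str]]:
    """Compare DNS records for hostname with the expected gateway IPs.

    "missing" lists gateways that have no DNS record yet;
    "stale" lists records that point to addresses not in the expected set.
    """
    resolved = set(resolve(hostname))
    expected = set(gateway_ips)
    return {
        "missing": sorted(expected - resolved),
        "stale": sorted(resolved - expected),
    }


# Example call (hypothetical hostname and addresses):
# check_gateway_records("s3.example.com", ["10.0.0.1", "10.0.0.2"])
```

A non-empty "missing" list suggests that a newly added gateway has not been published in DNS yet, while a non-empty "stale" list suggests that a removed gateway still has a record.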

Also take note of the following:

- Creating the compute cluster prevents (and replaces) the use of the management node backup and restore feature.

- If nodes to be added to the compute cluster have different CPU models, consult Setting Virtual Machines CPU Model.

6.5.1. Enabling Management Node High Availability

To make your infrastructure more resilient and redundant, you can create a high availability configuration of three nodes.

Management node HA and the compute cluster are tightly coupled, so changing nodes in one usually affects the other. Take note of the following:

- Each node in the HA configuration must meet the requirements for the management node listed in the Installation Guide. If you plan to create the compute cluster, its hardware requirements must be met in addition to these.

- If the HA configuration has been created before the compute cluster, all nodes in it will be added to the compute cluster.

- If the compute cluster has been created before the HA configuration, only nodes in the compute cluster can be added to the HA configuration. For this reason, to add a node to the HA configuration, add it to the compute cluster first.

- If both the HA configuration and the compute cluster include the same three nodes, single nodes cannot be removed from the compute cluster. In such a case, the compute cluster can be destroyed completely, but the HA configuration will remain. The reverse is also true: the HA configuration can be deleted, but the compute cluster will continue working.
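The coupling rules above can be summarized as a small model. This is only an illustrative sketch: the function names and node names are hypothetical, it covers just the cases stated in the list, and it does not call any product API.

```python
from typing import Iterable, Set


def ha_candidates(available_nodes: Iterable[str],
                  compute_nodes: Iterable[str]) -> Set[str]:
    """Nodes that may be added to the HA configuration.

    If the compute cluster already exists, only its nodes qualify;
    otherwise any available node may be considered.
    """
    available, compute = set(available_nodes), set(compute_nodes)
    if compute:
        return available & compute
    return available


def compute_nodes_on_create(selected_nodes: Iterable[str],
                            ha_nodes: Iterable[str]) -> Set[str]:
    """Compute cluster membership when HA was configured first.

    All nodes in the HA configuration join the compute cluster.
    """
    return set(selected_nodes) | set(ha_nodes)


def same_three_nodes(ha_nodes: Iterable[str],
                     compute_nodes: Iterable[str]) -> bool:
    """True when both configurations include the same three nodes.

    In that case single nodes cannot be removed from the compute cluster.
    A False result says nothing about other restrictions.
    """
    ha, compute = set(ha_nodes), set(compute_nodes)
    return len(ha) == 3 and ha == compute
```

For example, with a compute cluster of `node1`, `node2`, and `node3`, a fourth node that is not yet in the compute cluster would not be returned by `ha_candidates` until it is added to the compute cluster.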

Note

The compute cluster must have at least three nodes to allow self-service users to enable high availability for Kubernetes master nodes.

To enable high availability for the management node and admin panel, do the following:

Make sure that each node is connected to a network with the Admin panel and Internal management traffic types.

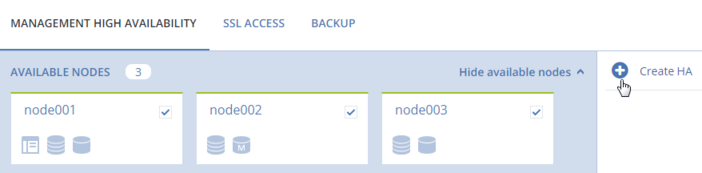

On the SETTINGS > Management node screen, open the MANAGEMENT HIGH AVAILABILITY tab.

Select three nodes and click Create HA. The management node is automatically selected.

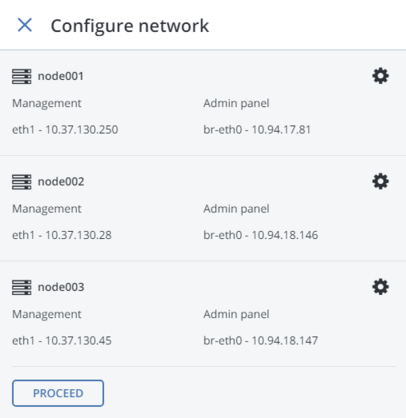

On Configure network, check that correct network interfaces are selected on each node. Otherwise, click the cogwheel icon for a node and assign networks with the Internal management and Admin panel traffic types to its network interfaces. Click PROCEED.

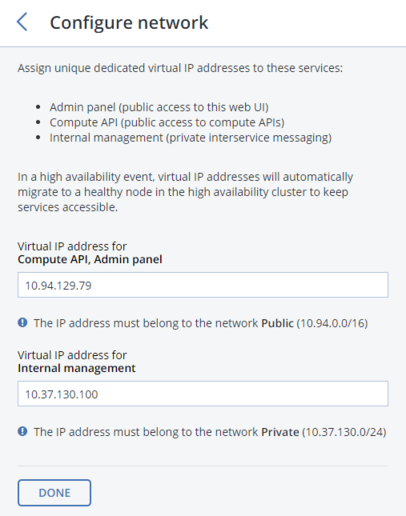

Next, on Configure network, provide one or more unique static IP addresses for the highly available admin panel, compute API endpoint, and interservice messaging. Click DONE.

Once the high availability of the management node is enabled, you can log in to the admin panel at the specified static IP address (on the same port 8888).

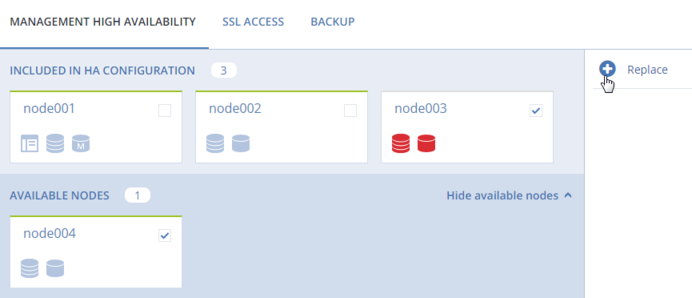
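To confirm that the highly available admin panel answers at its static IP address, you can test whether the port accepts TCP connections. The following is a minimal sketch using only the Python standard library; the function name and the example address are hypothetical, and port 8888 is taken from the text above.

```python
import socket

ADMIN_PANEL_PORT = 8888  # port stated in this guide for the admin panel


def admin_panel_reachable(address: str,
                          port: int = ADMIN_PANEL_PORT,
                          timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to address:port can be opened."""
    try:
        with socket.create_connection((address, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


# Example call (hypothetical static IP address):
# admin_panel_reachable("10.10.10.100")
```

A successful connection only shows that something is listening on the port. Because the relocation of the admin panel can take several minutes, a failed check shortly after a node failure does not necessarily indicate a problem.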

As management node HA must include exactly three nodes at all times, removing a node from the HA configuration is not possible without adding another one at the same time. For example, to remove a failed node from the HA configuration, you can replace it with a healthy one as follows:

On the SETTINGS > Management node > MANAGEMENT HIGH AVAILABILITY tab, select the one or two nodes that you wish to remove from the HA configuration, select the same number of available nodes to add in their place, and click Replace.

On Configure network, check that correct network interfaces are selected on each node to be added. Otherwise, click the cogwheel icon for a node and assign networks with the Internal management and Admin panel traffic types to its network interfaces. Click PROCEED.
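The constraint behind the replace operation can be sketched as a simple validation. This is an illustrative model rather than the product's logic: the function and node names are hypothetical, and it assumes that the number of nodes added must equal the number removed so that the configuration always holds exactly three nodes.

```python
from typing import Iterable, Set

HA_SIZE = 3  # management node HA includes exactly three nodes


def plan_replacement(ha_nodes: Iterable[str],
                     remove: Iterable[str],
                     add: Iterable[str]) -> Set[str]:
    """Return the HA membership after a replacement, or raise ValueError."""
    current, outgoing, incoming = set(ha_nodes), set(remove), set(add)
    if len(current) != HA_SIZE:
        raise ValueError("the HA configuration must contain three nodes")
    if not 1 <= len(outgoing) <= 2:
        raise ValueError("select one or two nodes to remove")
    if not outgoing <= current:
        raise ValueError("nodes to remove must be in the HA configuration")
    if len(incoming) != len(outgoing):
        raise ValueError("add as many nodes as you remove")
    if incoming & current:
        raise ValueError("nodes to add must not already be in the HA configuration")
    return (current - outgoing) | incoming
```

For instance, replacing a failed `node3` with a healthy `node4` in a configuration of `node1`, `node2`, and `node3` yields `node1`, `node2`, and `node4`, whereas removing a node without naming a replacement is rejected.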

To remove all nodes from the HA configuration, click Destroy HA.